AI: The Evolution and Its Impact on Industry

Over the past year, the AI (artificial intelligence) and ML (machine learning) space has seen significant advancements. From the release of new open-source models by companies like Meta and Mistral to the discovery of innovative applications for AI/ML across industries, the pace of progress has been remarkable.

Since OpenAI made ChatGPT publicly available in November 2022, Generative Pre-trained Transformers (GPTs) have become part of everyday life for many people. The hype around AI has only grown since then, and two years on, we’ve seen substantial improvements in model capabilities, especially in models released by major players such as OpenAI (GPT-4o), Anthropic (Claude 3.5), and Google (Gemini). While these models are already useful for day-to-day tasks such as writing emails, checking grammar, creating documents, or summarizing articles, their real potential for industry lies in their ability to draw on proprietary data to answer specific, process-related questions.

Open-Source Models and Their Advantages

Companies like Meta, Mistral, and Alibaba (with its Qwen models) have released open-source models together with their weights. This development is significant for two reasons. Firstly, you can run these models privately on your own hardware, some of them on devices as small as a mobile phone. Secondly, these models can be fine-tuned on your own data, making them highly adaptable to specific business needs.

Understanding Context Windows

Before diving into how to customize these models, it helps to understand the concept of a context window. A context window is the maximum amount of text, measured in tokens, that a large language model (LLM) can take into account at once, including your prompt, any uploaded documents, and the conversation so far. For example, GPT-4o, one of the models behind ChatGPT, has a context window of 128k tokens (roughly 96,000 English words, using the common rule of thumb of about 0.75 words per token). This means you can upload a large document and ask questions about its content. However, once a conversation grows beyond the context window, the earliest content no longer fits, and the model effectively “forgets” those details.
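To make the arithmetic concrete, here is a minimal Python sketch. It assumes the rough 0.75-words-per-token rule of thumb rather than a real tokenizer (actual counts depend on the model’s tokenizer and on the language), and it shows one simple way an application might keep a conversation inside a fixed token budget by dropping the oldest messages first. The function names are illustrative, not part of any library.

```python
import math

# Rule-of-thumb ratio for English text (an assumption); real token counts
# depend on the model's tokenizer.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(text: str) -> int:
    """Roughly estimate how many tokens a piece of text uses."""
    words = len(text.split())
    return math.ceil(words / WORDS_PER_TOKEN)


def tokens_to_words(tokens: int) -> int:
    """Convert a token budget into an approximate English word count."""
    return int(tokens * WORDS_PER_TOKEN)


def fit_to_window(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent messages whose estimated total fits in max_tokens.

    Older messages that do not fit are dropped, which is why a model
    appears to "forget" the start of a long conversation.
    """
    kept: list[str] = []
    total = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if total + cost > max_tokens:
            break
        kept.append(message)
        total += cost
    return list(reversed(kept))  # restore chronological order


if __name__ == "__main__":
    print(tokens_to_words(128_000))  # approximate words in a 128k-token window
    history = ["a b c", "d e f", "g h i"]
    print(fit_to_window(history, max_tokens=8))
```

Running it prints 96000 for the 128k-token window, and with a budget of 8 estimated tokens only the two most recent messages survive; the oldest one is dropped.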

Fine-Tuning vs Retrieval-Augmented Generation (RAG)

There are two primary methods to overcome the limitations of context windows and tailor LLMs to specific business needs: fine-tuning and retrieval-augmented generation (RAG).

1. Fine-Tuning

Fine-tuning involves further training an open-source model, such as Llama 3 70B, on your proprietary data, for example your company’s operating procedures or work instructions. While this process is computationally expensive, it produces an LLM that can not only converse with you but also answer questions and make decisions based on your specific business processes.
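Before any fine-tuning run, proprietary documents usually have to be turned into training examples. The minimal Python sketch below assumes you have already written question-and-answer pairs from your procedures, and serializes them as JSON Lines in a chat-style `messages` layout (system, user, assistant) that many fine-tuning tools accept. The exact schema varies by framework, so treat this as an illustration; the system prompt and example pair are hypothetical.

```python
import json

# Hypothetical system prompt; adapt it to your own use case.
DEFAULT_SYSTEM_PROMPT = "You answer questions about our company's operating procedures."


def to_chat_record(question: str, answer: str, system_prompt: str) -> dict:
    """Wrap one question/answer pair in a chat-style training record."""
    return {
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": question},
            {"role": "assistant", "content": answer},
        ]
    }


def to_jsonl(pairs: list[tuple[str, str]], system_prompt: str = DEFAULT_SYSTEM_PROMPT) -> str:
    """Serialize Q&A pairs as JSON Lines: one JSON object per line."""
    return "".join(
        json.dumps(to_chat_record(q, a, system_prompt), ensure_ascii=False) + "\n"
        for q, a in pairs
    )


if __name__ == "__main__":
    # Hypothetical pair drawn from an imaginary work instruction.
    pairs = [("Who signs off a finished control box?", "The shift quality inspector.")]
    print(to_jsonl(pairs), end="")
```

In practice the output would be written to a `.jsonl` file and handed to whichever fine-tuning tool you use; the quality and coverage of these pairs largely determine how well the tuned model reflects your processes.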

2. Retrieval-Augmented Generation (RAG)

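RAG, on the other hand, gives the LLM an external knowledge store that it can query when answering questions: the passages most relevant to a question are retrieved and added to the prompt, so the model can answer from your documents without being retrained. This approach is particularly useful if your data is continuously growing, as it eliminates the need for repeated fine-tuning sessions. The choice between fine-tuning and RAG depends on your specific application and requirements.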
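The retrieve-then-prompt idea behind RAG can be sketched in a few lines of Python. Production systems normally use an embedding model and a vector database; to keep this sketch self-contained, it swaps in simple bag-of-words cosine similarity, which only matches shared keywords. The documents and function names are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Insert the retrieved passages into the prompt sent to the LLM."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer the question using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    docs = [
        "Control boxes are tested at 400 V before shipping.",
        "Invoices are sent on the first of the month.",
        "Label each control box with its serial number.",
    ]
    print(build_prompt("How are control boxes tested?", docs, k=1))
```

Because new documents only need to be added to the `docs` collection (or, in a real system, indexed in the vector store), the knowledge base can grow without retraining the model.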

Industry Adoption of AI

Leading companies are already harnessing the power of AI. For instance, Siemens has begun using open-source LLMs for internal development, as highlighted in their blog (https://blog.siemens.com/2024/04/open-source-llms-for-everyone/). Similarly, the pharmaceutical industry is leveraging AI, with Siemens’ support, to accelerate drug discovery and optimize process development, as discussed in this article (https://blog.siemens.com/2024/09/the-transformative-power-of-ai-and-digital-twins-in-the-pharmaceutical-industry/).

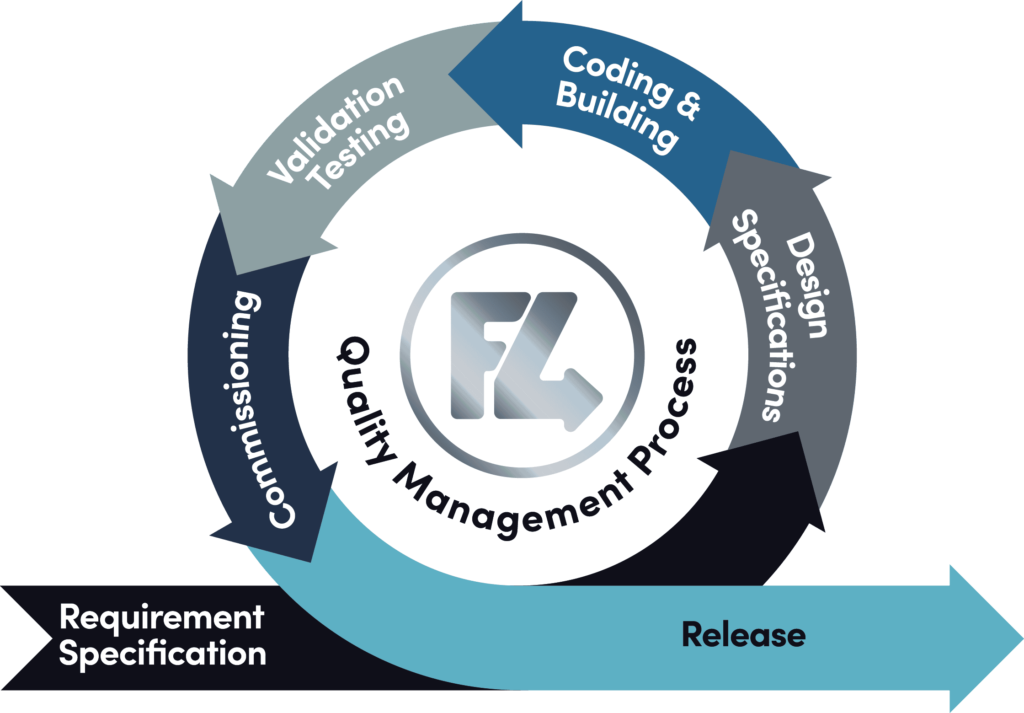

How Feed4ward Uses AI

At Feed4ward, we’ve integrated AI into our processes to improve efficiency. For example, we use the DeepSeek-V3 LLM to identify and label photos of electrical control boxes based on their serial numbers. These images are then automatically attached to the corresponding production records in our SAP Business One (SAP B1) ERP system using workflow automation.
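The matching step of a workflow like this can be sketched as follows. This is not Feed4ward’s actual implementation: it assumes the model has already returned a text label for each photo, uses a hypothetical `CB-` plus six-digit serial format, and stands in a plain dictionary for the lookup of production records in SAP B1. Photos without a recognizable or known serial are set aside for manual review rather than attached to a guess.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical serial-number format for illustration: "CB-" followed by six digits.
SERIAL_PATTERN = re.compile(r"\bCB-\d{6}\b")


@dataclass
class Attachment:
    photo: str
    serial: str
    production_order: int  # hypothetical production order number in the ERP


def extract_serial(model_output: str) -> Optional[str]:
    """Return the first serial number found in the model's text label, if any."""
    match = SERIAL_PATTERN.search(model_output)
    return match.group(0) if match else None


def plan_attachments(
    labels: dict[str, str], orders_by_serial: dict[str, int]
) -> tuple[list[Attachment], list[str]]:
    """Pair each photo with a production order, or flag it for manual review."""
    attachments: list[Attachment] = []
    needs_review: list[str] = []
    for photo, model_output in labels.items():
        serial = extract_serial(model_output)
        if serial is not None and serial in orders_by_serial:
            attachments.append(Attachment(photo, serial, orders_by_serial[serial]))
        else:
            needs_review.append(photo)
    return attachments, needs_review


if __name__ == "__main__":
    labels = {
        "IMG_0001.jpg": "Control box, serial number CB-123456",
        "IMG_0002.jpg": "Label not readable",
    }
    orders = {"CB-123456": 1001}  # stand-in for an ERP lookup
    attached, review = plan_attachments(labels, orders)
    print(attached)
    print(review)
```

The resulting `Attachment` list is what a workflow-automation step would act on; the actual upload to the ERP depends on the integration you use and is deliberately left out here.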

Another application involves using OpenAI’s Whisper speech-recognition model to transcribe recordings of in-person meetings, which are also diarized (separated by speaker). We then run these transcriptions through an LLM to generate summaries and identify action items.
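Once a recording has been transcribed and split by speaker, the remaining steps are mostly text handling. The minimal Python sketch below assumes the segments are already available as speaker-labelled snippets with start and end times (this structure is an assumption, not Whisper’s own output format). It merges consecutive snippets from the same speaker into single turns, builds a summarization prompt, and pulls action items out of the LLM’s reply, assuming the prompt asked for them on lines starting with `ACTION:`.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    speaker: str  # label assigned by the diarization step, e.g. "Speaker 1"
    start: float  # seconds from the start of the recording
    end: float
    text: str


def merge_turns(segments: list[Segment]) -> list[Segment]:
    """Combine consecutive segments from the same speaker into one turn."""
    merged: list[Segment] = []
    for seg in sorted(segments, key=lambda s: s.start):
        if merged and merged[-1].speaker == seg.speaker:
            last = merged[-1]
            merged[-1] = Segment(last.speaker, last.start, seg.end, f"{last.text} {seg.text}")
        else:
            merged.append(seg)
    return merged


def build_summary_prompt(segments: list[Segment]) -> str:
    """Format the transcript and ask the LLM for a summary plus action items."""
    transcript = "\n".join(
        f"[{turn.start:.1f}s] {turn.speaker}: {turn.text}" for turn in merge_turns(segments)
    )
    return (
        "Summarize the meeting transcript below, then list each action item "
        "on its own line starting with 'ACTION:'.\n\n" + transcript
    )


def extract_action_items(llm_output: str) -> list[str]:
    """Collect the lines the LLM marked as action items."""
    items = []
    for line in llm_output.splitlines():
        stripped = line.strip()
        if stripped.startswith("ACTION:"):
            items.append(stripped[len("ACTION:"):].strip())
    return items


if __name__ == "__main__":
    segments = [
        Segment("Speaker 1", 0.0, 2.0, "Let's start."),
        Segment("Speaker 1", 2.0, 4.0, "First, the schedule."),
        Segment("Speaker 2", 4.0, 6.0, "I will update it."),
    ]
    print(build_summary_prompt(segments))
```

Merging turns before prompting keeps the transcript shorter and easier for the LLM to follow, which also helps long meetings stay within the model’s context window.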

Conclusion

The rapid advancements in AI and ML over the past two years have transformed how businesses operate. From open-source models that can be fine-tuned to suit specific needs to innovative techniques like RAG, the possibilities are vast. Companies like Siemens and Feed4ward are already reaping the benefits, using AI to streamline processes, improve decision-making, and drive innovation.

As AI continues to evolve, its potential to revolutionize industries will only grow. Whether through fine-tuning models for specialized tasks or leveraging RAG for dynamic data integration, businesses that embrace these technologies will be better positioned to thrive in an increasingly competitive landscape. The future of AI is here, and it’s time to harness its power.

If you enjoyed reading our blog post on the evolution and impact of AI, why not check out our other articles, such as The AI Revolution in Pharmaceutical Manufacturing?